Day 2 of Build also brought some cool talks and announcements. Anything that still belonged to day 1 I have already added to the other post.

SQL Server news

The Build session I personally liked most was the one from the SQL Server team, “Modernize your applications with new innovations across SQL Server 2022 and Azure SQL”.

I have been a fan of SQL Server for a long time. In my daily work, hands-on time with it unfortunately falls short because of various framework abstractions, but the magic with which the SQL Server team keeps squeezing out more performance and features is just great. A few years ago you still heard about assistants in SQL Server that helped with optimizing SQL queries; the very next version already had a flag that let these optimizations be carried out automatically, without any interaction.

You can see what it looks like in practice when the search logic is adjusted based on historical information in the video above, starting at minute 11.

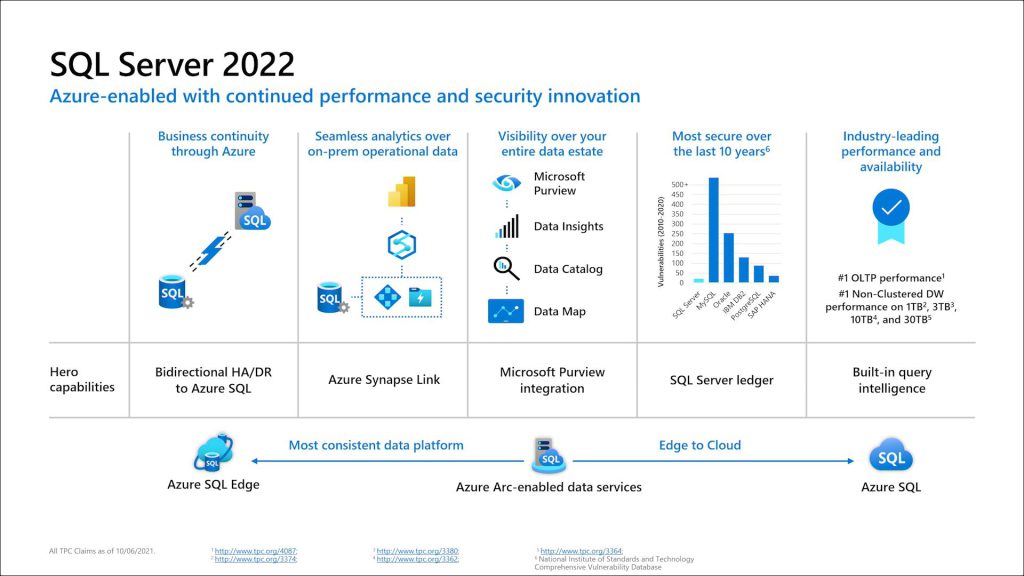

The presentation also announced SQL Server 2022 and its public preview release. Besides comprehensive integration into the Microsoft ecosystem, some new features were highlighted:

SQL Server Ledger (public preview)

SQL Server Ledger brings append-only behavior that mimics a blockchain. One of the goals is to ensure that data cannot be modified after the fact, so that all clients of the database can rely on its integrity and quality. Depending on the table type, rows either cannot be changed at all (append-only) or the earlier versions of updated and deleted rows are kept in a history table. To really protect the database from tampering, a digest of the last entry is also stored outside the database, in Azure.
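To make the append-only idea more tangible, here is a minimal Python sketch of a hash-chained log with an externally stored digest. This is only a toy model and not how SQL Server Ledger is implemented internally; the class `ToyLedger` and its methods are hypothetical names made up for this illustration.

```python
import hashlib
import json


class ToyLedger:
    """Toy append-only log: each entry's hash also covers the previous hash."""

    GENESIS = "0" * 64  # placeholder hash used before the first entry

    def __init__(self):
        self._entries = []  # list of (payload_json, entry_hash)

    @staticmethod
    def _hash(prev_hash: str, payload_json: str) -> str:
        return hashlib.sha256((prev_hash + payload_json).encode("utf-8")).hexdigest()

    def digest(self) -> str:
        """Hash of the latest entry; this is what would be stored externally."""
        return self._entries[-1][1] if self._entries else self.GENESIS

    def append(self, payload: dict) -> str:
        """Add a new entry; existing entries are never rewritten."""
        payload_json = json.dumps(payload, sort_keys=True)
        entry_hash = self._hash(self.digest(), payload_json)
        self._entries.append((payload_json, entry_hash))
        return entry_hash

    def verify(self, trusted_digest: str) -> bool:
        """Recompute the chain and compare it with the externally kept digest."""
        prev_hash = self.GENESIS
        for payload_json, entry_hash in self._entries:
            if self._hash(prev_hash, payload_json) != entry_hash:
                return False
            prev_hash = entry_hash
        return prev_hash == trusted_digest
```

Because every hash depends on all earlier entries, changing an old row either breaks the chain or produces a final hash that no longer matches the digest kept outside the database.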

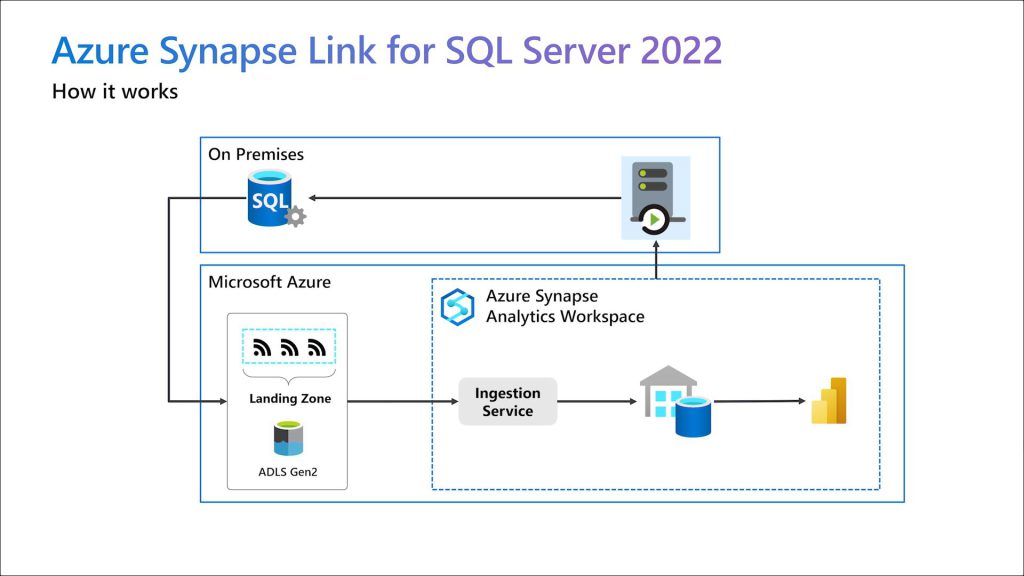

Azure Synapse Link for SQL

With Azure Synapse Link, SQL Server data can be made available to Synapse very quickly. The data is first synchronized once into an Azure Data Lake and then kept up to date from the SQL Server transaction log.
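The pattern described here (one full copy, then incremental updates from a change log) can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions, not the actual Synapse Link mechanism; the function names and the `(operation, key, row)` change format are hypothetical.

```python
from typing import Dict, List, Tuple

Row = Dict[str, object]
Change = Tuple[str, int, Row]  # (operation, primary key, row values)


def initial_snapshot(source: Dict[int, Row]) -> Dict[int, Row]:
    """One-time full copy of the source table into the replica."""
    return {key: dict(row) for key, row in source.items()}


def apply_changes(replica: Dict[int, Row], changes: List[Change]) -> Dict[int, Row]:
    """Replay logged changes in order so the replica catches up with the source."""
    for operation, key, row in changes:
        if operation in ("insert", "update"):
            replica[key] = dict(row)
        elif operation == "delete":
            replica.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {operation}")
    return replica
```

After the initial snapshot, only the logged changes have to be transferred, which is what keeps the replica fresh without re-copying the whole table.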

Azure Functions SQL bindings

Developers can now specify a query to be executed against Azure SQL directly on an Azure Functions parameter. The data is then provided when the function is called, without any additional code, and the developer can concentrate on processing it.

REST service calls directly from SQL (private preview)

With the stored procedure “sp_invoke_external_rest_endpoint” it will in future be possible to fire web service requests directly from a SQL statement. This can be used, for example, to notify a service that a record has just been inserted, without the service having to listen for changes itself.

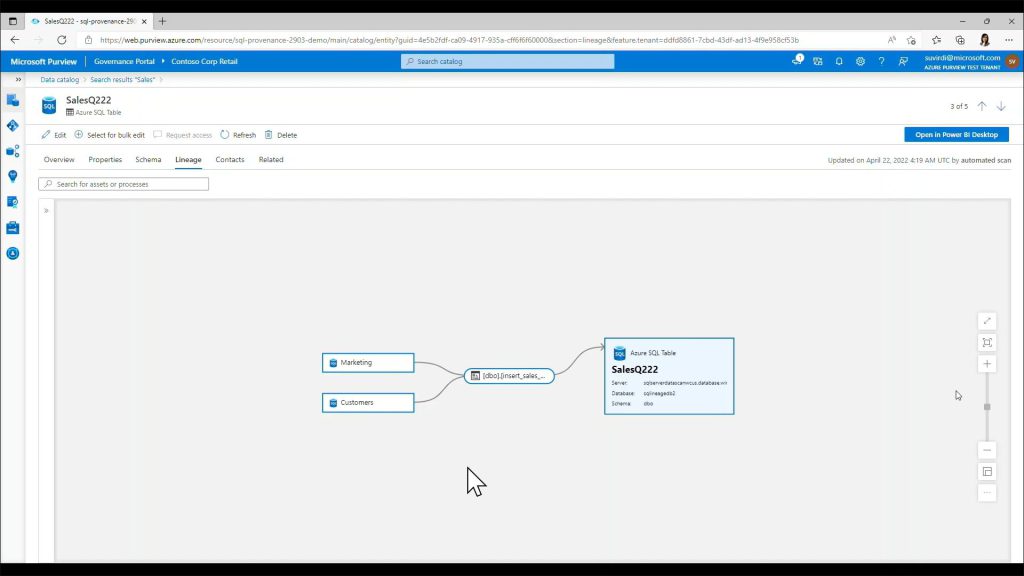

Dynamic Lineage

Another feature of Azure Purview was also introduced. If you enable Dynamic Lineage, you can look up the origin of a table's data in Purview, in addition to the table's contacts and schema. The Lineage feature itself seems to have been around for a while (unfortunately I lack some insight into Purview), but this is one more reason to get involved with this cool tool.

Microsoft Intelligent Data Platform

By the way, if you hear about the “Microsoft Intelligent Data Platform” and wonder what it actually means: it is the term Microsoft uses to group together the tools and features mentioned above and to highlight how powerful they are in combination. Everything around SQL Server, Azure SQL, Cosmos DB, Synapse, Power BI, Purview and Azure AI, i.e. databases, analytics and governance, is meant to play to its strengths so that you can get the best out of your data. You can find more information here.

Power*

Power Pages

“Power Pages” was introduced as a new member of the Power Platform. It is evidently a further development of the “Power Apps portals”, which are aimed at the general public. Particular emphasis was placed on the so-called “Design Studio”, which lets you choose from ready-made page layouts and customize them to your liking. As far as I can see, the Design Studio moves the design work out of the previous, rather dull Power Platform interface without losing any of its features.

Integration with the other Power* platforms therefore remains intact.

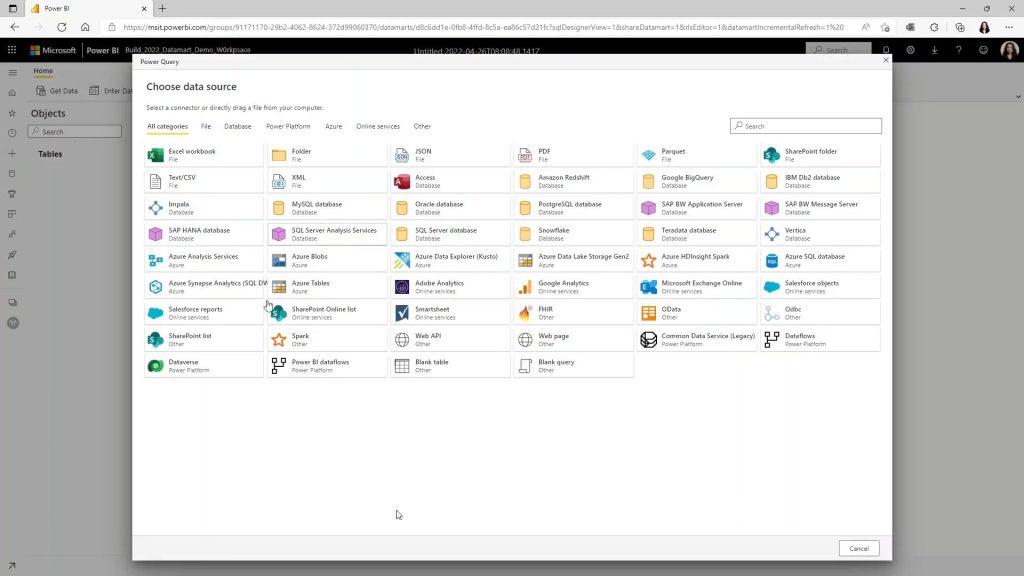

Power BI Datamart

A very powerful feature that many colleagues at Macaw have been looking forward to: Power BI Datamart (available with the Premium license) makes it easy to import data from various sources into a data warehouse that Power BI (and other tools, such as SSMS) can then access, and to process that data using Power Query. All of this can happen in the browser, so no additional software is required. In conjunction with the Synapse Link mentioned above, one barrier after another falls that might have kept you from getting started right away with your own data and data analysis.

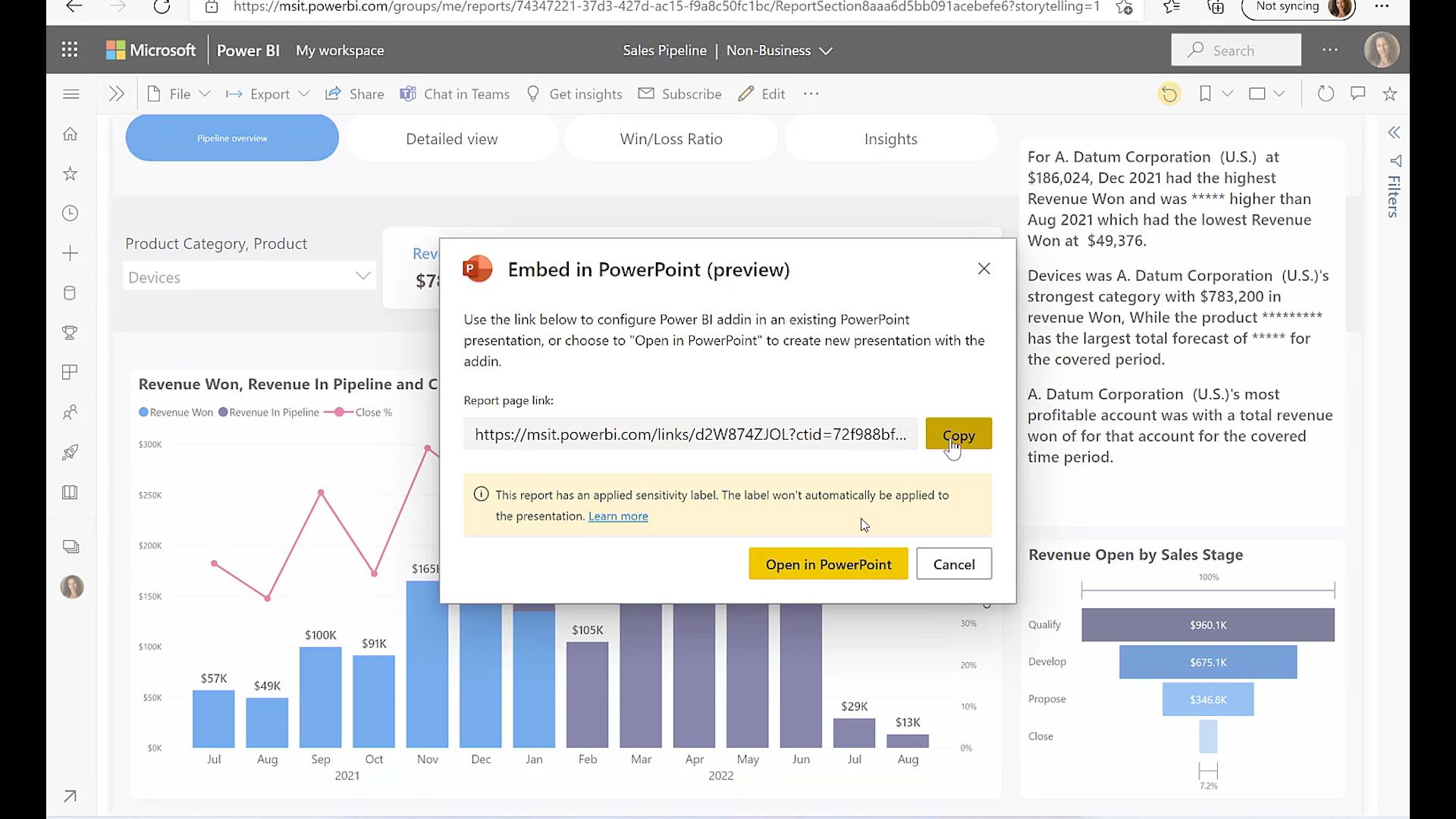

Power … Point

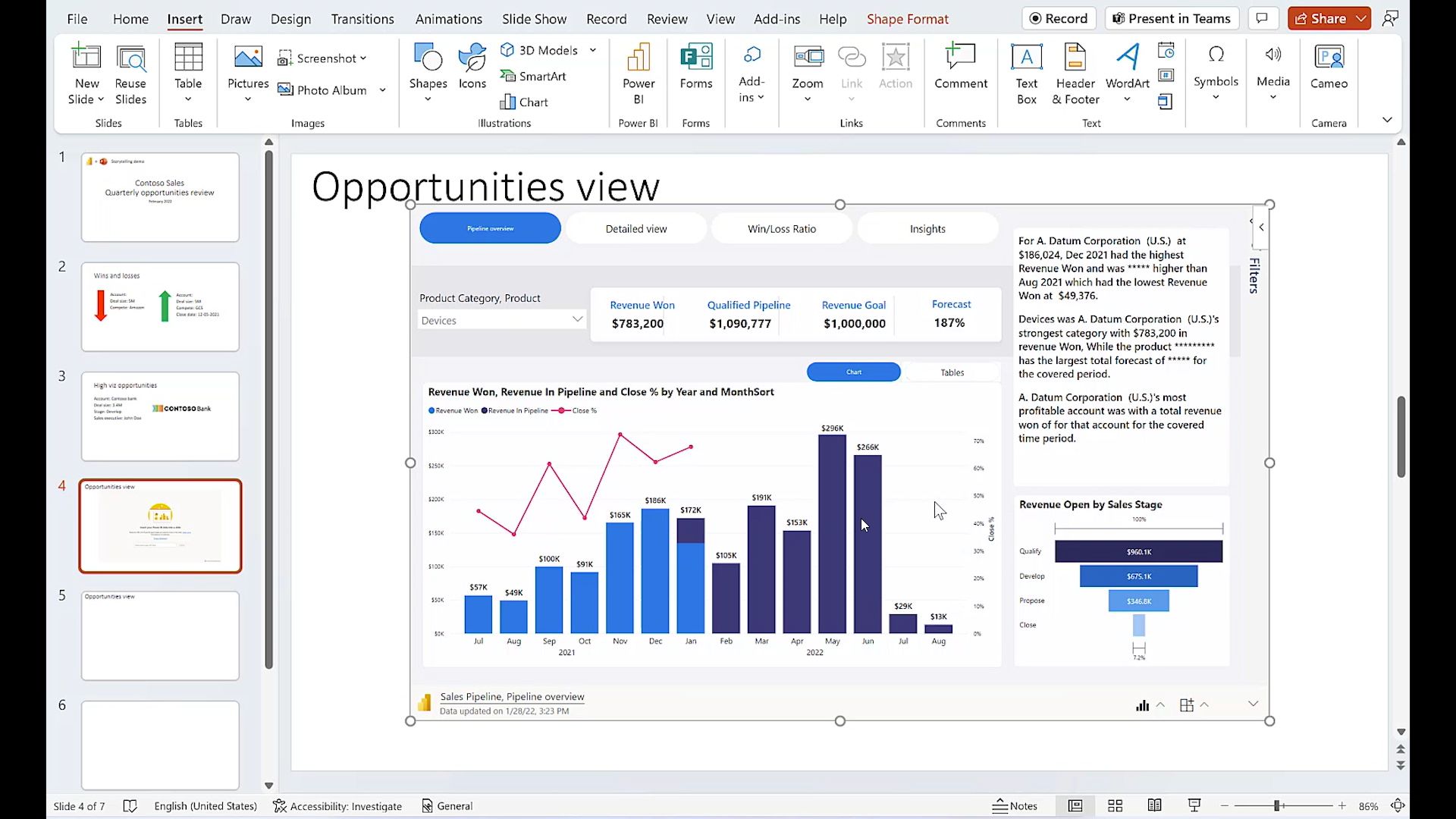

OK, this might be a bit unexpected, because PowerPoint does not have much to do with the Power Platform apart from the name. BUT it is well known from various business reports that managers repeatedly open their Power BI reports, take a screenshot or copy a link, and then use these static copies in their presentations. Now there is the Power BI add-in for PowerPoint, which lets you place a report DIRECTLY on a PowerPoint slide. You can then refresh the data with one click and even interact with the live component on the slide. This should make one or two boring reports much more interesting.

Fluid Framework

By the way, my coolest experience on day 2 was dialing into the Fluid Framework roundtable meeting. I had one or two questions about the Loop components and thought I could bring them up there. Not only was the who's who of the Fluid team present; the three or four other participants included the colleagues from Hexagon who had presented the Teams demo in the keynote. It was a very pleasant round with good insights into how things work and what is planned.

Since the roundtables are no longer publicly viewable, I don't know what I can and should share here. However, I have already added the link to the Live Share SDK, which I heard about in this meeting, to the Day 1 post. Thanks again for your time!

If you want to see for yourself how the Fluid Framework works (which, by the way, only covers the sync part and not the Loop components), this session provides a good summary.

AI

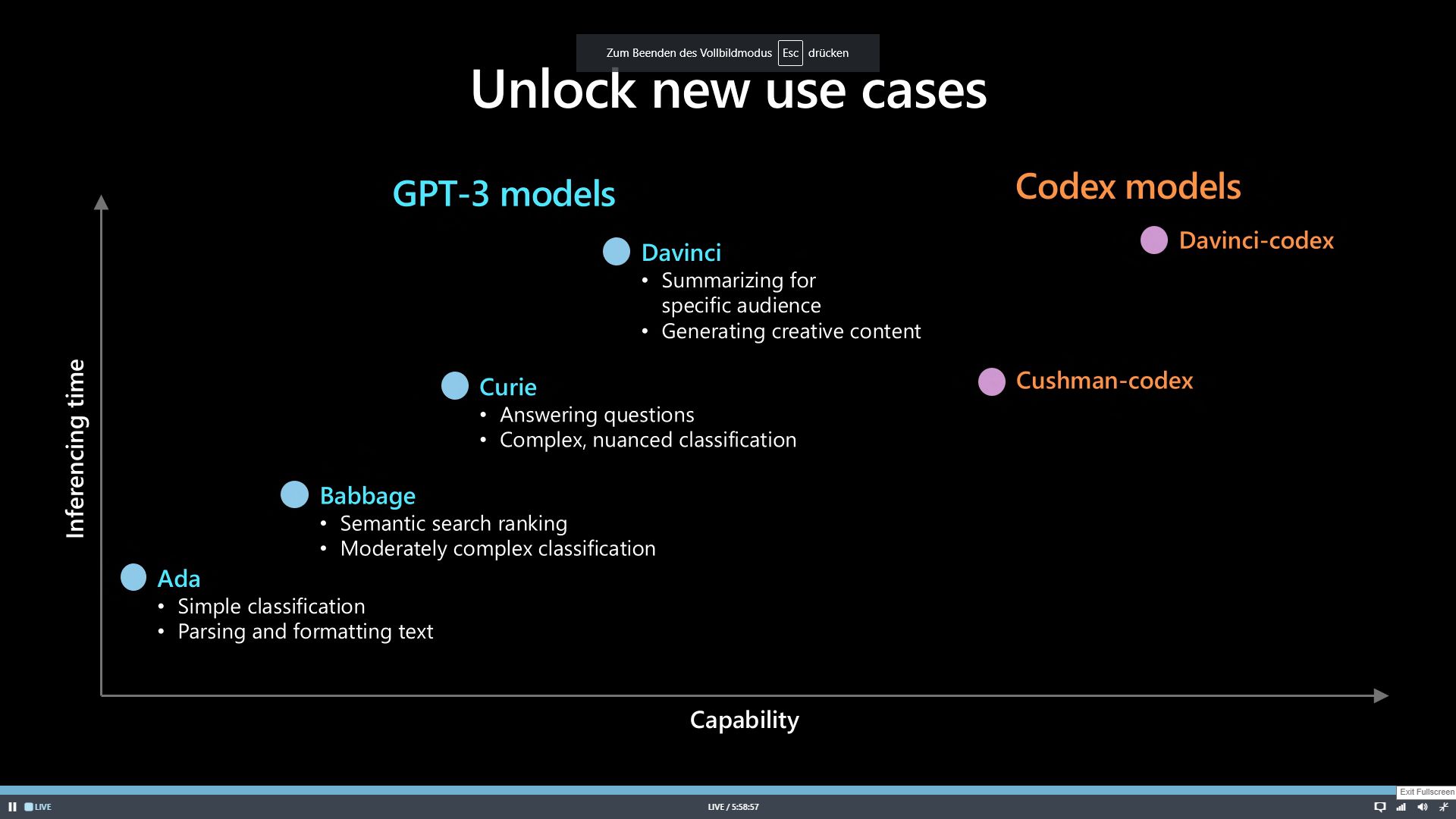

The last topic that I haven't mentioned here yet is AI. Microsoft has been working with OpenAI since at least 2019 and is building various AI services based on the GPT-3 model (see Power Fx at Ignite 2021). This year, the latest developments around Codex, a descendant of GPT-3, were presented. While GPT-3 focuses on natural language, Codex is all about … code. Codex is behind tools like GitHub Copilot.

Not only code, but also its explanation

While the previous demos were based on someone describing a function and Codex generating code from it, Codex can now also go the other way and describe existing code. This is of course extremely useful for generating documentation automatically.

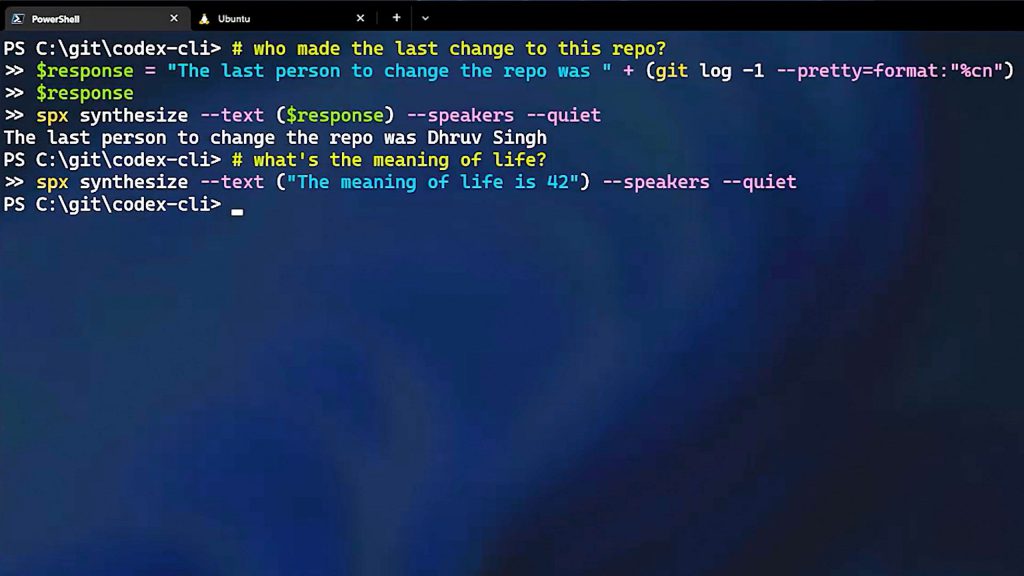

Codex CLI

A shell that can generate, for example, PowerShell commands from text descriptions such as “what is my IP”. The prerequisite is an OpenAI account.

You can find the CLI here.

Demo projects

A few demo projects that Microsoft has released for Codex:

- An extension for Minecraft that turns natural language into commands for virtual characters in the game, e.g. “go to the table”. (Demo)

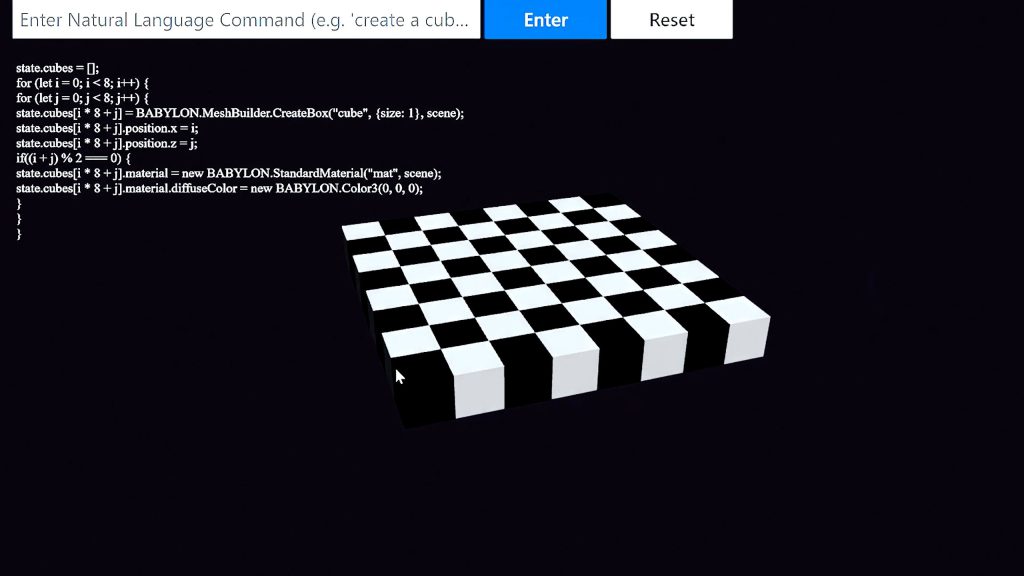

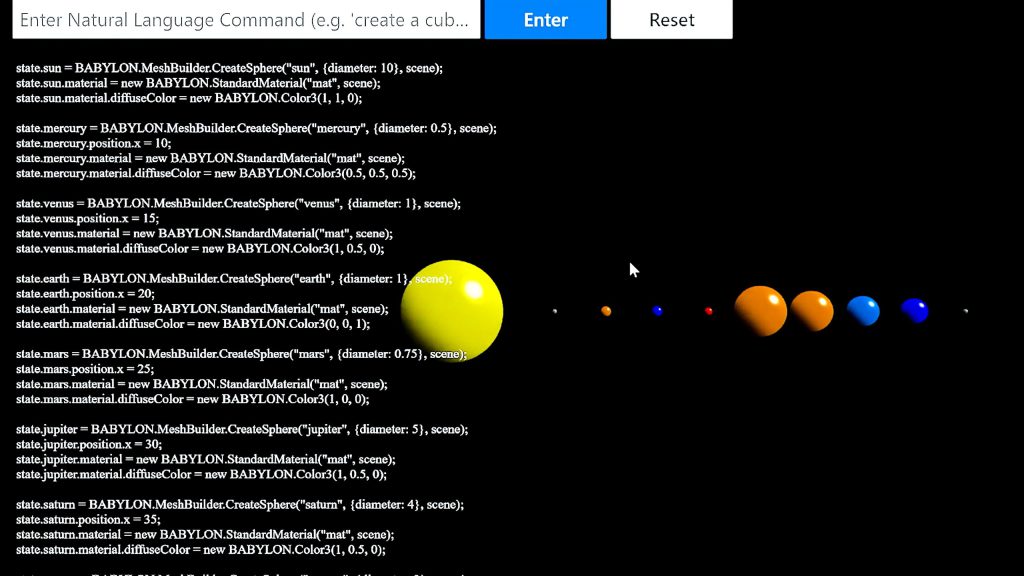

- Another very interesting use is controlling Babylon.js (a JavaScript-based 3D engine for the web). The designer describes in natural language what they would like to see, and Codex tries to map those wishes onto known Babylon.js methods such as “color”, “draw area”, etc. In the demo, among other things, a planetary system and a chessboard are created using only cubes and spheres. (Demo)